Democracy vs Democratize

This week's newsletter is a brief look at AI and democracy.

Mad Science Solves...

I recently heard a speaker call for AI to be “democratized”. A quick Google search reveals two definitions of “democratize”:

- introduce a democratic system or democratic principles to

- make (something) accessible to everyone

When this word is used in public discourse, particularly concerning technology and science, it calls on an aspirational association with the first definition but only truly makes contact with the latter (and even then, only minimally).

For me, “democratize” has become a dirty word, a term hucksters use to distract from their true goals (and zeitgeisters use to seem informed). Its users want us to think, “democracy”, while many of them are actually thinking, “market penetration”. The missing third definition is

- Allow anyone to do anything with (especially me)

Democracy is a political mechanism for collective action. It is a means for us to decide together on a shared future. Democratization, by this third definition, is only about individuals and their power to do whatever they want. This isn’t what most speakers are attempting to invoke with the word. They are usually talking about access and wrapping it in democratic ideals. “Let’s democratize AI,” absolutely implies a collective good and a call to break monopolies, just as “let’s democratize journalism and politics with social networks,” implied a decade ago. That was the future we bought, but it isn’t the world we got.

If we in the United States took democratic action on gun control, we would collectively decide shared policies on ownership and use. Guns wouldn’t be outlawed, but I suspect that their use would be significantly restricted compared to America today. Some would be unhappy with the results (in both directions), but we would have decided together. Instead, our history has democratized gun ownership. Rather than a collective decision and shared acceptance, we have burly dudes hanging out at polling stations carrying rifles with bump stocks. Do we want AI to follow the same path as social media and “gun rights”?

Access is laudable, but democratization has become a bait-and-switch: invoking democracy and access, while actually dismantling collective action. AI needs democracy, not democratization.

Stage & Screen

A testimonial from Dr. Ming's recent talk for physicians:

"I want to express my sincere gratitude for your insightful plenary session at our conference on “The Opportunities and Limitations of AI”. Your expertise and thought-provoking presentation shed light on the crucial role AI plays in shaping the future of healthcare and education.

Your ability to articulate the complexities of AI in healthcare and education was truly impressive, providing valuable insights into both the opportunities and limitations. Your dedication to advancing these fields is inspiring, and your vision for a more inclusive and efficient healthcare system resonated deeply.

Thank you for sharing your knowledge, expertise, and passion with us. Your contributions have undoubtedly enriched the conference, and I look forward to seeing the positive impact of your work on the future of healthcare and education."

Kind regards,

Dr Collings

2024 is here, with engagements already secured in Paris, Stockholm, DC, Toronto, and more!

I would love to give a talk just for your organization on any topic: AI, neurotech, education, the Future of Creativity, the Neuroscience of Trust, The Tax on Being Different ...why I'm such a charming weirdo. If you have events, opportunities, or would be interested in hosting a dinner or other event, please reach out to my team below. - Vivienne

Research Roundup

AI Can Help…If You Understand People

Years ago, I worked with my wife on an NLP tool to improve the value of online discussion forums (fora? That sounds like something that’s kept healthy by a yogurt enema) in university. We found that our model could detect when discussions were going off the rails and losing their pedagogical value. Importantly, we also found that faculty moderators had only a very narrow time window (~10-15 min) in which to intervene to save the discussion. Even then, they had to enter the discussion where the students had taken it and only then lead the conversation back to where they wanted it to be.

It seems that political conversations follow similar principles. A recent study developed an LLM “to make real-time, evidence-based recommendations intended to improve participants’ perception of feeling understood.” Deployed among many participants across political topics, the tool appeared to “improve reported conversation quality, promote democratic reciprocity, and improve the tone” of the discussions. This is quite heartening and is certainly a prosocial use for AI.

I was troubled, however, by an additional observation of the authors. The LLM did not change “the content of the conversation” or move “people’s policy attitudes.” I’m not so foolish as to think everyone would magically agree if only AI presented the facts, but if AI-based interventions improve the feel of political discourse but not the substance, I’m a bit worried that they might be helping us avoid hard conversations rather than supporting productive friction.

Good Intentions make Bad Policy

I’ve been asked to help on half a dozen projects focused on dis- and misinformation online and interviewed another dozen times on the same subject. Every one of those projects was invested in a presumed mechanism for change: if people just had the opportunity to discover the “truth” they would be resistant to the lies. Unfortunately, good intentions make bad policy.

A recent paper explored the “conventional wisdom…that searching online when evaluating misinformation would reduce belief in it”. Instead, the evidence found the exact opposite. When people are able to search online to evaluate the “truthfulness of false news articles”, their searches “actually increase the probability of believing” the misinformation.

Fortunately, this effect wasn’t universal. It was largely “concentrated among individuals for whom search engines return lower-quality information”. Bizarrely, people are more likely to believe true stories supported by “low-quality sources” but not when they’re supported by “mainstream sources”.

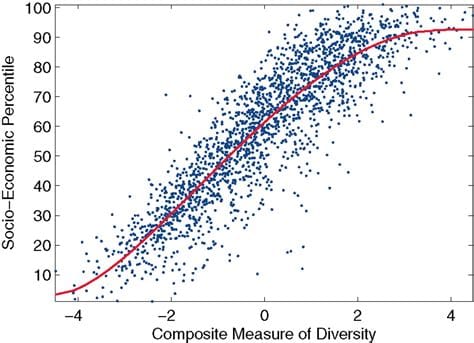

In essence, these individuals aren’t looking for evidence to challenge their beliefs; they are looking for affirmation of their convictions. In this, they are like every business leader who ever bragged to me about running a data-driven company, only for the inevitable reveal that they use the data to justify the policies they’d already selected. If we want to build better tools for citizenship, we must stop assuming human beings make choices like perfectly rational agents.

| Follow more of my work at | |
|---|---|
| Socos Labs | The Human Trust |
| Dionysus Health | Optoceutics |
| RFK Human Rights | GenderCool |
| Crisis Venture Studios | Inclusion Impact Index |
| Neurotech Collider Hub at UC Berkeley | |